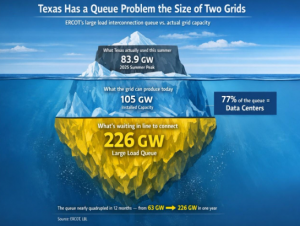

226 gigawatts.

That’s how much load is sitting in ERCOT’s large load interconnection queue right now. To put that in perspective, ERCOT’s all-time peak demand – set on a scorching August afternoon in 2023 – was 85.5 gigawatts. The entire installed generation capacity of the Texas grid is about 105 GW.

The queue is more than double the grid it’s trying to connect to. A year ago, it was 63 GW. It nearly quadrupled in twelve months.
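If you want to check the math, here's a quick sketch that uses only the figures above. The 105 GW capacity number is the approximate one quoted, so treat that last ratio as rough.

```python
# Figures quoted above, in gigawatts
queue_now_gw = 226.0
queue_year_ago_gw = 63.0
peak_demand_gw = 85.5          # ERCOT all-time peak, August 2023
installed_capacity_gw = 105.0  # approximate, as cited above

growth_multiple = queue_now_gw / queue_year_ago_gw
pct_increase = (growth_multiple - 1) * 100
queue_vs_peak = queue_now_gw / peak_demand_gw
queue_vs_capacity = queue_now_gw / installed_capacity_gw

print(f"Growth multiple:     {growth_multiple:.2f}x")    # 3.59x
print(f"Percent increase:    {pct_increase:.0f}%")       # 259%
print(f"Queue / peak demand: {queue_vs_peak:.2f}x")      # 2.64x
print(f"Queue / capacity:    {queue_vs_capacity:.2f}x")  # 2.15x
```

So "nearly quadrupled" works out to about a 3.6x jump (roughly 260%), and the queue is more than 2.6 times the all-time peak.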

This is a big damn deal. And not for the reason most people think.

What’s Actually in the Queue

The numbers are pretty incredible to see. About 77% of that 226 GW is data centers. Another 8.8% is crypto mining. The rest is hydrogen production and industrial facilities. The wave is overwhelmingly AI: hyperscalers, GPU colocators, and compute-cloud giants all racing to plant flags in Texas.
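Applying those shares to the 226 GW headline gives a rough sense of scale. This is a back-of-the-envelope sketch. The remainder bucket lumps hydrogen and industrial together because the shares above don't split them.

```python
queue_gw = 226.0

# Shares of the queue as quoted above
shares = {
    "data centers": 0.77,
    "crypto mining": 0.088,
}
# Everything else (hydrogen production and industrial facilities)
shares["hydrogen + industrial"] = 1.0 - sum(shares.values())

breakdown_gw = {category: queue_gw * share for category, share in shares.items()}
for category, gw in breakdown_gw.items():
    print(f"{category:<24}{gw:6.1f} GW")
```

The data center slice alone comes out around 174 GW, roughly twice ERCOT's all-time peak demand.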

The context is staggering. Before Winter Storm Uri five years ago, Texas had 13 registered data centers. Today there are nearly 100. In November, Google announced $40 billion for three new ones in the state.

It’s honestly hard to even conceptualize just how much power this is… especially for a guy who’s spent most of his life in the water industry 😊

The Capital Planning Problem Nobody’s Talking About

This is where the queue story becomes an infrastructure capital story.

ERCOT just approved the Strategic Transmission Expansion Plan (STEP), a $33 billion buildout of roughly 2,468 miles of new 765 kV transmission backbone. The first phase alone is $9.4 billion for 1,108 miles of new high-voltage lines, targeted for service between 2030 and 2032. That's roughly $5.5 billion per year in transmission investment over a six-year horizon.
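The averages behind those numbers are easy to reproduce. A rough sketch, assuming the full $33 billion is spread evenly over the six-year horizon (real spending won't be that smooth):

```python
step_total_usd = 33e9
step_miles = 2_468
phase1_usd = 9.4e9
phase1_miles = 1_108
horizon_years = 6  # assumption: even spending over the six-year horizon

annual_spend = step_total_usd / horizon_years
cost_per_mile_total = step_total_usd / step_miles
cost_per_mile_phase1 = phase1_usd / phase1_miles

print(f"Average annual spend:         ${annual_spend / 1e9:.1f}B/yr")          # $5.5B/yr
print(f"Average cost per mile (STEP): ${cost_per_mile_total / 1e6:.1f}M")     # $13.4M
print(f"Cost per mile (phase 1):      ${cost_per_mile_phase1 / 1e6:.1f}M")    # $8.5M
```

These are crude averages. The dollar figures may include costs beyond line miles, so the gap between the two per-mile numbers shouldn't be read as a precise comparison.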

These are enormous, irreversible capital commitments. Transmission lines aren’t cloud servers you can spin down. Once you pour concrete for a 765 kV corridor, it’s there for 50+ years.

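Making things trickier still: according to Lawrence Berkeley National Laboratory's "Queued Up" research on power plants seeking interconnection, only 13% of projects that entered interconnection queues between 2000 and 2019 actually reached commercial operation. Seventy-seven percent were withdrawn. The median wait time has doubled from under two years to over four. Those are generation numbers, not load numbers, and load queues may behave differently, but the pattern is hard to ignore. We are building a multi-decade transmission backbone based on demand signals from a queue process where, historically, seven out of eight projects never happen.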
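Here's what that history would mean if it carried over to today's large load queue. That's a big assumption, since the 13% figure comes from power plant requests, so the sketch also runs two hypothetical higher completion rates as a sensitivity check, not a forecast.

```python
QUEUE_GW = 226.0  # current ERCOT large load queue, as cited above

def realized_load_gw(queue_gw: float, completion_rate: float) -> float:
    """Load expected to reach operation if a given share of the queue gets built."""
    return queue_gw * completion_rate

# 13% is the historical completion rate cited above (power plant queues);
# 25% and 50% are hypothetical scenarios, not forecasts.
for rate in (0.13, 0.25, 0.50):
    online = realized_load_gw(QUEUE_GW, rate)
    print(f"{rate:.0%} completion: {online:6.1f} GW built, {QUEUE_GW - online:6.1f} GW never built")
```

Even at the historical rate, roughly 29 GW would still show up, about a third of the all-time peak. The hard part is that nobody knows in advance which 29 GW.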

Friends of mine who are industry experts tell me that many developers practice something called "queue bombing": stuffing as many projects into the queue as possible, knowing only a handful will be accepted. And since the process takes so long, you want to throw everything you can into it.

What a mess.

Master Planning vs. Queue Management

The fundamental problem is that ERCOT – like most grid operators – has been running a reactive interconnection process (through no fault of their own… it’s hard to get in front of this stuff). Someone files a request. ERCOT studies it. Studies cascade into restudies as new requests land. The queue grows. The studies pile up. Nothing moves.
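To see why restudies spiral, here's a deliberately simplified toy model. It's my own simplification, not how ERCOT actually runs studies: assume every new request gets studied and also forces a restudy of every request still pending, with nothing clearing in between. Then count individual request-studies versus studying each request once as part of a batch.

```python
def serial_request_studies(n_requests: int) -> int:
    # Toy assumption: request k is studied when it arrives and again each time
    # a later request lands, so total studies are 1 + 2 + ... + n.
    return n_requests * (n_requests + 1) // 2

def batched_request_studies(n_requests: int) -> int:
    # Toy assumption: each request is studied exactly once, together with the
    # rest of its batch, and later batches do not reopen earlier ones.
    return n_requests

for n in (10, 100, 1000):
    print(f"{n:>5} requests: {serial_request_studies(n):>7} serial vs {batched_request_studies(n):>5} batched")
```

The serial count grows with the square of the queue while the batched count grows linearly. Real studies are messier, but that's the shape of the problem.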

This is what the industry politely calls “structural challenges” and what I’d call a planning process designed for a world that no longer exists.

A recent analysis from PACES nailed the core issue: traditional transmission planning relies on speculative interconnection requests and top-down forecasts that don't account for where data centers will actually be built. The result is billions in grid upgrades built to serve projects that never materialize. They found that 765,000 acres of suitable data center land exist in Texas, but only 17% sits near substations with available transmission capacity. And just five bottleneck transmission elements constrain 30% of that viable acreage.

Five elements. Thirty percent of developable land. That’s the kind of finding that should rewrite a capital plan overnight.

What PACES argues (and I think they’re right) is that we need to flip the model. Instead of reacting to individual queue requests, planners should work from the ground up: identify where data centers can actually be built (land, water, fiber, permitting), map that against grid capacity, and target upgrades where a single investment unlocks multiple viable sites. TRUE master planning, not queue management.
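Here's a minimal sketch of that "one upgrade, many sites" logic. The sites and grid elements (site_a, line_1 and so on) are made up, and this is a simple greedy heuristic I'm using for illustration, not PACES's actual method. Each site lists the constrained elements standing between it and a connection; each round, pick the upgrade that fully unlocks the most acreage.

```python
from collections import defaultdict

# Hypothetical candidate sites: developable acres, plus the constrained grid
# elements that must be upgraded before each site can connect.
SITES = {
    "site_a": {"acres": 1200, "blocked_by": {"line_1"}},
    "site_b": {"acres": 800, "blocked_by": {"line_1"}},
    "site_c": {"acres": 1500, "blocked_by": {"line_2"}},
    "site_d": {"acres": 600, "blocked_by": {"line_1", "line_3"}},
    "site_e": {"acres": 900, "blocked_by": set()},  # already near spare capacity
}

def greedy_upgrade_plan(sites, max_upgrades):
    """Greedily pick upgrades, each time choosing the one that unlocks the most acres."""
    # Copy each blocker set so the input data is left untouched.
    remaining = {name: set(info["blocked_by"]) for name, info in sites.items()}
    plan = []
    for _ in range(max_upgrades):
        gain = defaultdict(int)
        for name, blockers in remaining.items():
            # Only credit an upgrade when it is the last blocker left at a site.
            if len(blockers) == 1:
                (element,) = blockers
                gain[element] += sites[name]["acres"]
        if not gain:
            break  # nothing left that a single upgrade can unlock
        best = max(gain, key=gain.get)
        plan.append((best, gain[best]))
        for blockers in remaining.values():
            blockers.discard(best)
    return plan

print(greedy_upgrade_plan(SITES, max_upgrades=3))
# → [('line_1', 2000), ('line_2', 1500), ('line_3', 600)]
```

The greedy rule is myopic: it only credits an upgrade once it's the last thing blocking a site, and a real plan would weigh acres per dollar plus water, fiber, and permitting rather than acres alone. But it captures why a handful of bottleneck elements can deserve more attention than the queue itself.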

ERCOT’s Response: Moving Fast, But Toward What?

To ERCOT's credit, they're not standing still. They've hired McKinsey to redesign the large load interconnection process. As of January 2026, they've launched two new internal organizations: Interconnection and Grid Analysis, and Enterprise Data and AI. And they're developing a Batch Study framework that would group load requests into defined batches aligned with planning cycles, allocate transmission capacity systematically, and kill the restudy death spiral.
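I'm not reproducing ERCOT's actual rules here. This is a hypothetical sketch of the general batching idea: requests are grouped by planning cycle, each batch is studied once against the capacity available to it, and later arrivals don't reopen earlier batches. The LoadRequest fields and the priority rule (requests that have posted financial security go first, then larger loads) are my own illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class LoadRequest:
    name: str
    mw: float
    batch: int             # planning cycle the request was filed into
    security_posted: bool  # hypothetical readiness test: money on the table

def allocate_batches(requests, capacity_mw_by_batch):
    """Study each batch once and allocate its capacity; earlier batches are never reopened."""
    results = {}
    for batch in sorted(capacity_mw_by_batch):
        remaining_mw = capacity_mw_by_batch[batch]
        cohort = [r for r in requests if r.batch == batch]
        # Hypothetical priority: posted security first, then larger loads.
        cohort.sort(key=lambda r: (not r.security_posted, -r.mw))
        for req in cohort:
            if req.mw <= remaining_mw:
                results[req.name] = "allocated"
                remaining_mw -= req.mw
            else:
                results[req.name] = "not allocated this cycle"
    return results

requests = [
    LoadRequest("dc_1", 500, batch=1, security_posted=True),
    LoadRequest("dc_2", 800, batch=1, security_posted=False),
    LoadRequest("crypto_1", 300, batch=1, security_posted=True),
    LoadRequest("dc_3", 1000, batch=2, security_posted=True),
]
print(allocate_batches(requests, {1: 1000, 2: 750}))
```

In this toy run, dc_2 misses out in its cycle because it hasn't put up security, and dc_3 simply doesn't fit. A real framework also needs a rule for what happens to requests that miss, which this sketch deliberately leaves out.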

That framework hits the PUC Open Meeting on February 20. It’s the most consequential planning reform ERCOT has attempted in years.

Meanwhile, Texas passed Senate Bill 6, requiring large load customers over 75 MW to contribute directly to interconnection and transmission upgrade costs. It’s the legislature’s answer to the stranded cost question: if you want to plug a gigawatt data center into the Texas grid, you’re going to help pay for the wires that get you there. That’s a start.

But cost allocation alone isn’t master planning. SB6 tells you who pays. It doesn’t tell you where to build, what to build first, or how to sequence $33 billion in capital against demand that might – or might not – show up.

So What

Here’s what this comes down to. ERCOT is staring at the largest demand growth event in the history of any American grid operator. The load ramps from AI data centers look like bulls seeing red flags – fast, massive, unpredictable. We’re asking a grid that nearly collapsed during a cold snap to absorb demand growth that would be aggressive for damn near every nation on earth.

And we're making capital decisions that will shape Texas infrastructure for half a century based on a queue that quadrupled in a year, run through a queue process that has historically been wrong 87% of the time.

The queue isn’t the plan. It was never supposed to be the plan. But in the absence of real master planning, the kind that starts with land, water, power, and capital sequencing and works backward to transmission design, it’s all we’ve got. And $33 billion is a hell of a bet to make on a wish list.

What I’m Watching

The February 20 PUC meeting and subsequent actions. If ERCOT’s Batch Study framework can turn a 226 GW free-for-all into something resembling coordinated capital planning (i.e. linking load commitments to transmission investment with real financial skin in the game) it becomes a model for every ISO in the country.

If it’s just another process layered on top of a broken system, we’ll spend the next decade building infrastructure in the wrong places for customers who never show up.

And Texas ratepayers will be the ones holding the bag.

Sources

- ERCOT’s large load queue has nearly quadrupled in a single year — Latitude Media

- ERCOT’s large load queue jumped almost 300% last year — Utility Dive

- Red-hot Texas is getting so many data center requests that experts see a bubble — CNBC

- The future of ERCOT: 765 kV transmission expansion — Enverus

- ERCOT approves $9.4B project to meet data center demand — Energy Capital

- Queued Up: Characteristics of Power Plants Seeking Transmission Interconnection — Lawrence Berkeley National Laboratory

- The grid is planning for data centers that will never exist — PACES

- Texas SB6 Establishes New Transmission Fees for Large Load Customers — Pillsbury Law

- ERCOT Announces Strategic Organizational Changes — ERCOT

- ERCOT introduces Batch Study framework for Large Load Interconnections — EPE Consulting

- Data center activity ‘exploded’ in Texas, spiking reliability risks — Utility Dive